- Citations

The use of mobile devices such as smartphones and tablets has been increasing. These devices collect many types of data, including user-provided images, voice recordings, and text. This accumulated data is valuable for machine learning (ML), which tends to perform well when large amounts of data are available.

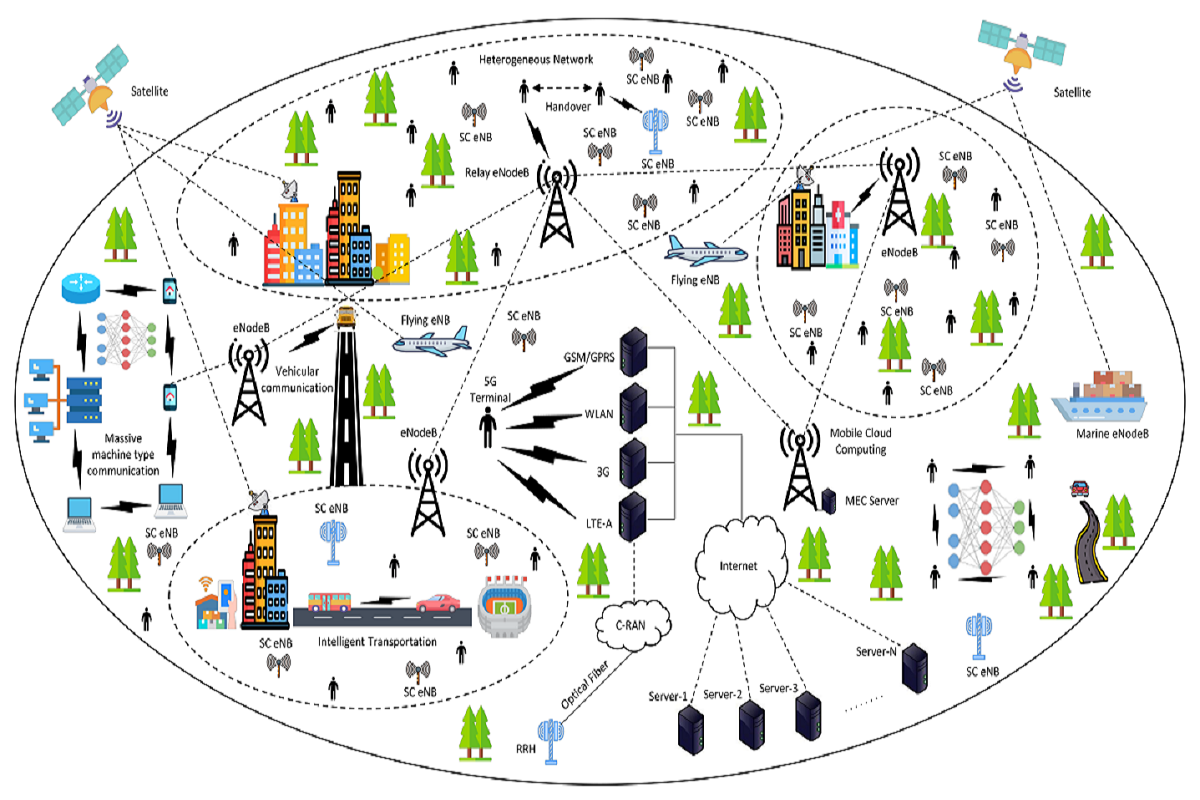

To build a successful ML model, especially one that runs on edge devices such as mobile phones, it is necessary to reduce the amount of data gathered, strengthen its security, reduce the number of trainable parameters (weights), and cope with an unstable network environment. Research on federated learning (FL) has progressed steadily to address these problems and to make use of the vast amount of data on mobile devices. This project focuses on minimizing network communication costs by applying an optimization algorithm to the communication step of FL.

Machine learning (ML) is a type of artificial intelligence (AI) that allows software applications to become more accurate at predicting outcomes without being explicitly programmed to do so. Machine learning algorithms use historical data as input to predict new output values.

FL is an ML technique that trains models on decentralized data. It helps protect data privacy because raw data stays on local machines, such as personal devices, and is never transferred to a central server. It also cuts communication costs because each device sends only its updated model parameters to the server instead of large amounts of source data.
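The round trip described above can be illustrated with a minimal sketch of weighted parameter averaging (often called federated averaging). This is not the optimization algorithm this project uses; the one-parameter linear model, the function names (`local_update`, `federated_average`, `train`), the learning rate, and the toy data are all assumptions chosen to keep the example small.

```python
from typing import List, Tuple

# (x, y) pairs held privately by one client; hypothetical toy format
Dataset = List[Tuple[float, float]]


def local_update(weight: float, data: Dataset, lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient-descent steps on one client's data for y ~ weight * x."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to the weight
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Average client weights, weighting each by its number of local samples."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(clients: List[Dataset], rounds: int = 20) -> float:
    """Simulate the server loop: broadcast, train locally, aggregate."""
    global_weight = 0.0
    for _ in range(rounds):
        # Only (weight, sample count) leaves each client; raw data stays local.
        updates = [(local_update(global_weight, data), len(data)) for data in clients]
        global_weight = federated_average(updates)
    return global_weight


if __name__ == "__main__":
    # Assumed toy data following y = 3x, split across three clients
    clients = [
        [(1.0, 3.0), (2.0, 6.0)],
        [(3.0, 9.0)],
        [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)],
    ]
    print(train(clients))  # approaches 3.0 as rounds increase
```

In this sketch each client uploads a single float and a sample count per round, regardless of how many data points it holds, which is the source of the communication saving. Real models send many parameters per round, so the size and frequency of those uploads become the cost worth optimizing.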